TLDR:

- The instinct to build the enforcement layer yourself is a good engineering instinct. It is just based on an incomplete picture of what the build actually requires.

- Output validation and an enforcement layer are not the same thing. Most homegrown solutions solve one, not the other.

- The first working version of Context Layer took the baseline from 7% to 42.5%. I looked at that number and decided it was not enough. Getting to 81.7% took 5-6 months of 15-18 hour days, a full-time job included, with CL getting whatever hours remained.

- If enforcement is your competitive differentiation, build it. If it is the infrastructure your product needs to run on, that is a different calculation.

- The question was never whether you could build it. The question is whether that is the best use of the next several months.

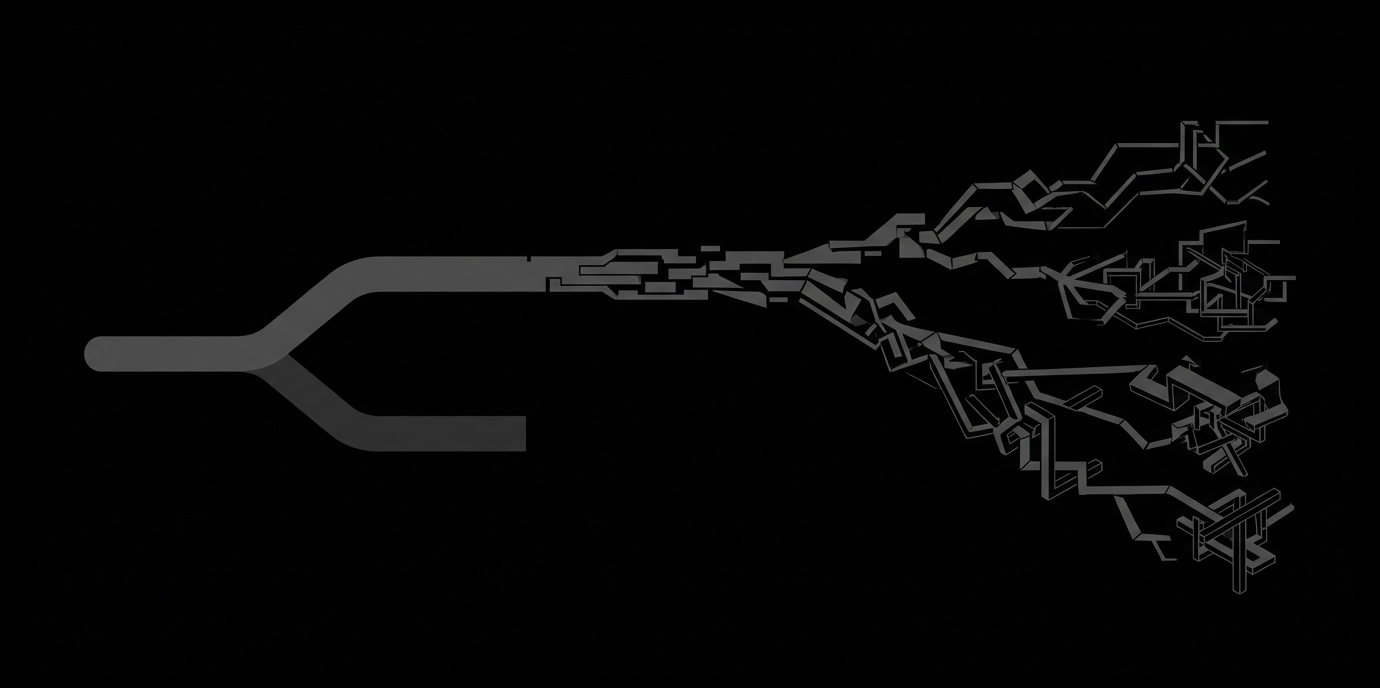

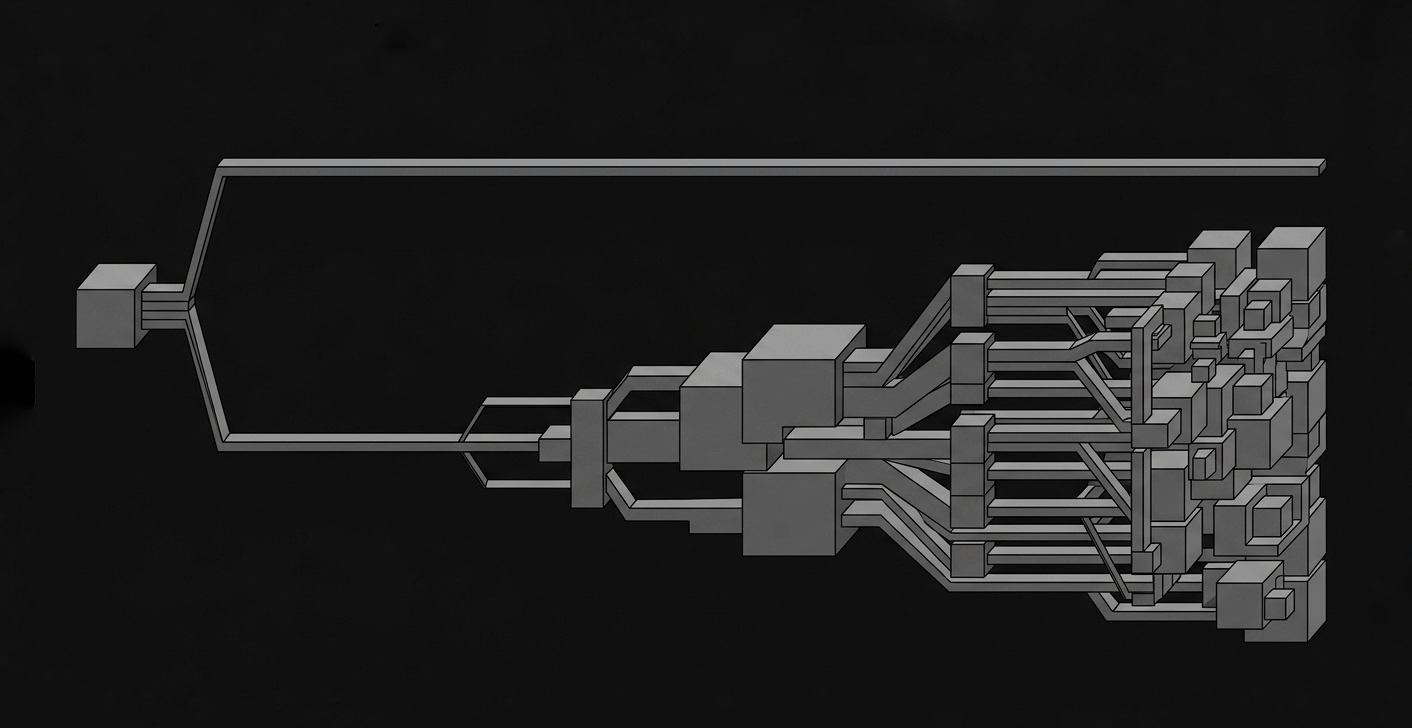

The last post ended with the enforcement layer sitting between your application and your LLM provider, owning everything the model was never designed to own. If you read that and immediately thought "I could build something like this myself," that instinct is worth taking seriously. This post is about what the complete picture looks like, from someone who built it.

The instinct to build is not wrong

You write validation logic every day. You have Pydantic schemas, Redis for state, custom retry wrappers, structured output parsers. The enforcement layer, from the outside, looks like a collection of things you already know how to build. Admission control sounds like middleware. Context assembly sounds like prompt construction. Verification sounds like output validation.

The instinct to own your infrastructure is a good engineering instinct, and I will not argue against it. If something is core to how your product runs, you should understand it. You should probably control it.

What is flawed is the assumption that each of those pieces is as discrete as it looks from the outside, and that discrete pieces stay discrete once your workflow starts evolving.

What you are actually signing up for

Here is what the enforcement layer actually requires, in plain terms.

Admission control before execution. Not just authentication. The layer has to derive execution mode, project identity, and runtime permissions before a single step runs. The model never sees a request that has not cleared this. Sounds like middleware until you realize it has to be consistent across every entry point in your system, not just the one you thought of first.
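To make "derive before a single step runs" concrete, here is a minimal sketch of an admission function. Every name in it (`admit`, `Admission`, the `PROJECTS` registry, the `x-api-key` header, the `flow` and `chat` mode labels) is hypothetical and chosen for illustration; it is not Context Layer's API. The point is only that every entry point calls the same function and nothing proceeds without its result.

```python
from dataclasses import dataclass
from typing import Mapping


class AdmissionError(Exception):
    """Raised when a request may not proceed to execution."""


@dataclass(frozen=True)
class Admission:
    project_id: str
    mode: str
    permissions: frozenset


# Hypothetical registry: API key -> (project id, allowed modes, permissions).
PROJECTS = {
    "key-abc": ("proj-1", frozenset({"flow", "chat"}), frozenset({"invoke_model"})),
}


def admit(headers: Mapping[str, str], body: Mapping[str, object]) -> Admission:
    """Derive project identity, execution mode, and permissions, or refuse."""
    key = headers.get("x-api-key", "")
    if key not in PROJECTS:
        raise AdmissionError("unknown credentials")
    project_id, allowed_modes, permissions = PROJECTS[key]
    mode = body.get("mode")
    if mode not in allowed_modes:
        raise AdmissionError(f"mode {mode!r} is not permitted for {project_id}")
    # The frozen result is the only thing later stages are allowed to trust.
    return Admission(project_id=project_id, mode=str(mode), permissions=permissions)
```

The function itself is the easy part. The hard part is making sure the HTTP handler, the background worker, and the internal test harness all go through it, with no second code path that skips it.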

Deterministic context assembly. The constraints the model sees at step 8 have to be identical to what it saw at step 1. Not approximately identical. Identical. This means owning context construction completely, independent of what accumulated between steps. This is not prompt construction. It is a deterministic assembly process that has to hold under every possible workflow state, including the ones you did not design for.
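One way to picture "identical, not approximately identical" is a canonical serialization of the constraints plus a fingerprint you can compare across steps. This is a sketch under my own assumptions: the constraints are a JSON-serializable dict, and the helper names (`canonical`, `constraints_fingerprint`, `assemble_context`) are made up for this example.

```python
import hashlib
import json


def canonical(constraints: dict) -> str:
    """Serialize constraints so key order and whitespace cannot vary."""
    return json.dumps(constraints, sort_keys=True, separators=(",", ":"))


def constraints_fingerprint(constraints: dict) -> str:
    """Stable hash of the constraints; compare it at every step."""
    return hashlib.sha256(canonical(constraints).encode("utf-8")).hexdigest()


def assemble_context(constraints: dict, step_input: str) -> str:
    """Build the context from owned constraints plus this step's input only.

    Nothing accumulated between steps is read here, so the constraints
    line at step 8 is byte-for-byte the same as at step 1.
    """
    return f"CONSTRAINTS:{canonical(constraints)}\nINPUT:{step_input}"


if __name__ == "__main__":
    rules = {"tone": "formal", "max_words": 50}
    step1 = assemble_context(rules, "draft the summary")
    step8 = assemble_context(rules, "finalize the summary")
    # The first line of both contexts is the same string.
    print(step1.split("\n")[0] == step8.split("\n")[0])
```

A real assembly process has to cover far more than one dict, but the property to hold onto is the same: the constraints the model sees are a pure function of what the layer owns, never of conversation history.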

Verification independent of the model. The output check cannot involve the model. That is the entire point. Which means you need a verification engine that evaluates outputs against owned constraints without asking the system that produced the output whether it did a good job. Getting this to catch real violations without producing false positives on normal output is not a weekend task, and it is not obvious where the line is until you have seen enough failure cases to know.
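As an illustration of checking output without asking a model, here is a small deterministic verifier. The constraint keys (`max_chars`, `forbidden_patterns`, `required_json_keys`) and the `Violation` type are assumptions I invented for the sketch; real constraint sets are richer, and tuning them to avoid false positives is where the time goes.

```python
import json
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Violation:
    rule: str
    detail: str


def verify(output: str, constraints: dict) -> list:
    """Evaluate output against owned constraints. No model call anywhere."""
    violations = []

    max_chars = constraints.get("max_chars")
    if max_chars is not None and len(output) > max_chars:
        violations.append(Violation("max_chars", f"{len(output)} > {max_chars}"))

    for pattern in constraints.get("forbidden_patterns", []):
        if re.search(pattern, output):
            violations.append(Violation("forbidden_pattern", pattern))

    required_keys = constraints.get("required_json_keys")
    if required_keys:
        try:
            data = json.loads(output)
        except json.JSONDecodeError:
            violations.append(Violation("required_json_keys", "output is not valid JSON"))
        else:
            if not isinstance(data, dict):
                violations.append(Violation("required_json_keys", "output is not a JSON object"))
            else:
                missing = [k for k in required_keys if k not in data]
                if missing:
                    violations.append(Violation("required_json_keys", f"missing {missing}"))

    return violations
```

Given the same output and the same constraints, this returns the same verdict every time. That repeatability is the property a judge model cannot give you.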

Session lifecycle management. Flow sessions have to be created, tracked, ordered, and terminated correctly. Steps have to execute sequentially. Replay detection has to work so identical steps return cached responses instead of re-invoking the model. Concurrent requests have to be rejected cleanly.
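A compressed sketch of those lifecycle rules, in-memory and single-process. `FlowSession`, `run_step`, and the `call_model` callback are hypothetical names, and the choice to serve a replayed step from cache even after the session has terminated is my assumption for the example, not a statement about how Context Layer behaves.

```python
import hashlib
import threading


class SessionError(Exception):
    """Raised for out-of-order, concurrent, or post-termination requests."""


class FlowSession:
    def __init__(self, total_steps: int):
        self.total_steps = total_steps
        self.next_step = 1
        self.closed = False
        self._cache = {}
        self._lock = threading.Lock()

    def run_step(self, step: int, payload: str, call_model):
        # Reject overlapping requests instead of queueing them.
        if not self._lock.acquire(blocking=False):
            raise SessionError("concurrent request rejected")
        try:
            key = (step, hashlib.sha256(payload.encode("utf-8")).hexdigest())
            if key in self._cache:
                # Replay: identical step and payload, so no model call.
                return self._cache[key]
            if self.closed:
                raise SessionError("session already terminated")
            if step != self.next_step:
                raise SessionError(f"expected step {self.next_step}, got {step}")
            result = call_model(payload)
            self._cache[key] = result
            self.next_step += 1
            if self.next_step > self.total_steps:
                self.closed = True  # explicit termination after the last step
            return result
        finally:
            self._lock.release()
```

Even at this size the interactions show up: the order of the replay check, the termination check, and the ordering check changes what callers observe, and each ordering is defensible for some workflow.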

Provider safety. Base URL allowlists, provider validation, credential handling that never persists keys, retry logic with exponential backoff for transient failures.
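Two of those pieces fit in a few lines each. The sketch below uses only the standard library; `ALLOWED_HOSTS`, `TransientError`, and both function names are placeholders I made up, and the allowlist entry is an example value rather than a recommendation.

```python
import time
from urllib.parse import urlsplit

# Example allowlist; which hosts belong here is a deployment decision.
ALLOWED_HOSTS = frozenset({"api.openai.com"})


class TransientError(Exception):
    """Stand-in for timeouts, rate limits, and 5xx responses."""


def check_base_url(base_url: str) -> str:
    """Accept only HTTPS URLs whose parsed hostname is on the allowlist."""
    parts = urlsplit(base_url)
    if parts.scheme != "https" or parts.hostname not in ALLOWED_HOSTS:
        raise ValueError(f"provider URL not allowed: {base_url!r}")
    return base_url


def call_with_backoff(fn, attempts: int = 4, base_delay: float = 0.5, sleep=time.sleep):
    """Retry fn on TransientError, doubling the delay after each failure."""
    for attempt in range(attempts):
        try:
            return fn()
        except TransientError:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the failure
            sleep(base_delay * 2 ** attempt)
```

Comparing the parsed hostname, rather than searching the URL string for an allowed name, is what keeps a URL like `https://api.openai.com@evil.example/` out. Credential handling is deliberately absent from the sketch: the idea is that a key is passed in per call and never written anywhere.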

Each of these is manageable as a first version. The problem is that they are not discrete in practice. They interact. When your workflow changes, the interactions change. The second version of the enforcement layer, the one that handles your actual production workflow six months from now, is where the real cost starts showing up.

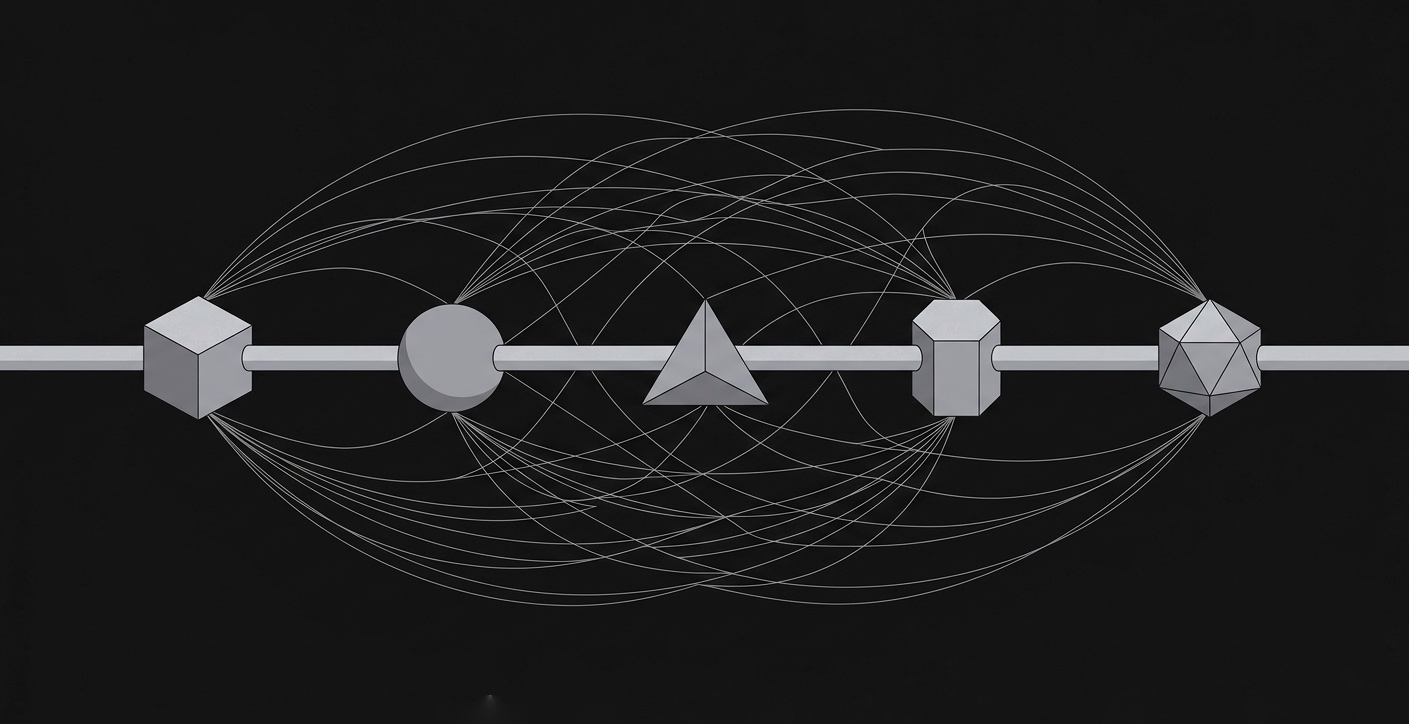

I know this because I built it. The first working version of Context Layer took the baseline from 7%, which is what direct LLM calls hit on a strict multi-step agentic workflow with no enforcement layer, to 42.5%. I looked at that number and decided it was not enough. Most people would have shipped it, and I understand why: 42.5% feels like meaningful progress when you started at 7%. But it was not solving the problem deeply enough, and proving the layer's value at that number was going to be difficult. So I went deeper, rebuilt the enforcement approach, and got to 70%. Shipped that publicly. Then v1.1 pushed it to 81.7%.

The model across all three numbers was GPT-4o mini. Not GPT-4o. Not a frontier model. The enforcement layer is what made a cheap model perform like reliable infrastructure.

That progression took 5-6 months of 15-18 hour days that included a full-time job, leaving 3-4 hours of sleep and whatever was left in between for CL. Solo. And the hardest part was not writing the code. It was the decisions about what the enforcement layer actually needed to own versus what I could defer, and getting those wrong cost weeks each time.

That is the honest cost of building this correctly. Not a weekend. Not a sprint. Months of decisions, false starts, and rebuilds before the numbers were good enough to mean something.

What you have probably already built

If you are mid-build on your own version of this, you probably have a validation function between your workflow steps. Maybe a Pydantic schema that checks output structure. A retry loop for when the model returns malformed output. Some state management carrying context forward between steps. Possibly a judge model evaluating whether output is good enough before the next step runs.

That is real engineering and it solves a real problem. But it is not the same problem the enforcement layer solves, and that distinction matters more than it sounds.

What you have built is output validation. It checks whether the output has the right shape after the model produces it. That is one dimension of one step.
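For reference, this is roughly the shape of what I mean by output validation: a structure check plus a retry loop. I have written it with the standard library instead of Pydantic so it runs anywhere; `validate_shape`, `step_with_retry`, and the `call_model` callback are illustrative names, not anyone's real API.

```python
import json


def validate_shape(raw: str, required: dict):
    """Return the parsed object if it has the required keys and types, else None."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    for key, expected_type in required.items():
        if not isinstance(data.get(key), expected_type):
            return None
    return data


def step_with_retry(call_model, prompt: str, required: dict, max_attempts: int = 3):
    """Call the model until the output has the right shape or attempts run out."""
    for _ in range(max_attempts):
        data = validate_shape(call_model(prompt), required)
        if data is not None:
            return data
    raise ValueError("model never returned well-formed output")
```

Everything here happens after the model call. Nothing in it controls what the model was given, which step it was on, or whether the state it worked from had drifted.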

What the enforcement layer owns is different. It owns the space before the model call, not just after it. It owns context assembly so the model is working from the right inputs, not just evaluated on its outputs. It owns the session so state does not drift between steps regardless of what your application does. It owns verification that is independent of the model, not a second model call that introduces its own probabilistic behavior into your compliance check.

The line is not "your solution is worse." The line is that your solution and the enforcement layer are solving different problems. Output validation catches bad output. The enforcement layer prevents the conditions that produce bad output in the first place, and catches what slips through anyway.

The developer spending $300 a month on judge model calls is paying for output validation after the fact. The teams with custom Pydantic state schemas and checkpoint systems are doing state management without the enforcement boundary that makes state management actually hold. These are real engineering efforts. They just do not add up to an enforcement layer no matter how much you stack them.

What the real cost looks like

Time is the easy part to count. Money is also easy. The cost that does not show up on any budget is engineering time that did not go into your actual product.

"Half my time goes into debugging the agent's reasoning instead of the output." That is not a complaint about a bad model. That is a developer describing what maintaining homegrown enforcement infrastructure feels like in production. Not debugging the thing they set out to build. Debugging the layer holding it together.

The teams building explicit checkpoint systems, custom task wrappers, and retry architectures are not building these once. They are rebuilding them every time their workflow evolves. Every new step, every new constraint, every new provider integration touches the enforcement logic. Infrastructure that has to keep up with the product never finishes, and the maintenance cost never appears in the original estimate.

That is the number that does not get calculated before the build starts.

When you should build it yourself

If the enforcement layer is genuinely core to your competitive differentiation, build it. If the specific way your system enforces constraints is the thing that makes your product different from everything else in your market, owning that infrastructure is the right call. Build it, maintain it, make it yours.

If your workflow is simple enough that a validation function between steps genuinely covers what you need, use that. Not every workflow needs a full enforcement boundary. A three-step workflow with loose output requirements does not need session lifecycle management and deterministic context assembly. A validation function is the right tool for that job, and you should use the right tool.

The honest question is not "could I build this?" You probably could. The question is whether the enforcement layer is where your differentiation lives, or whether it is the infrastructure your actual product needs to run on.

Most teams, if they answer that honestly, are building on top of the enforcement layer. Not competing with it.

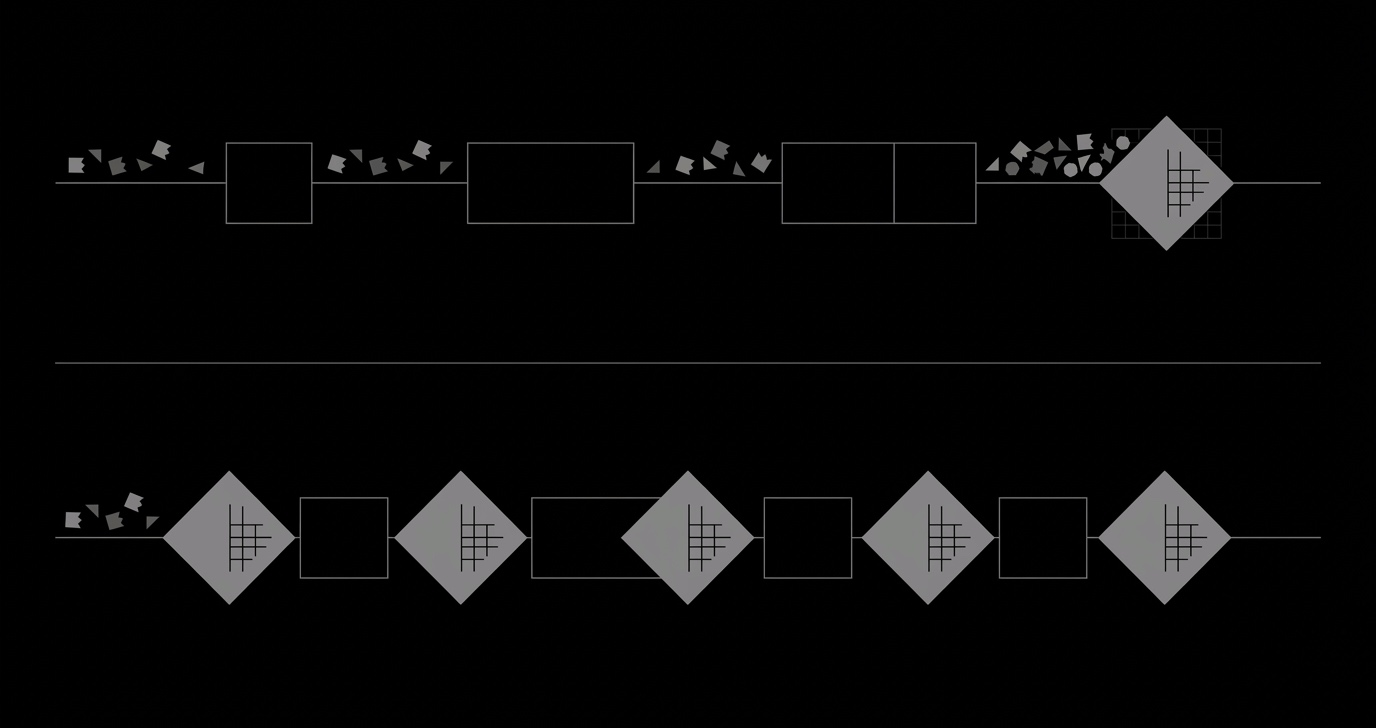

What Context Layer gives you instead

The enforcement boundary, ready. Admission control, deterministic context assembly, verification independent of the model, session lifecycle management, provider safety. Two execution modes depending on what you are building. Structured multi-step workflows with explicit step ordering and termination. Persistent conversational execution where session state does not drift.

Same model. No infrastructure to maintain as your workflow evolves. The 7% is what you get without any enforcement layer. The 81.7% is what happens when you put the right boundary around the same model and the same prompts. That model was GPT-4o mini. Cheap models become reliable enough to ship when the system around them is built to run reliably. The model was never the bottleneck.

You can provision it for free at cl.kaisek.com.

To conclude

The question was never whether you could build it. You probably could.

The question is whether that is the best use of the next several months, and whether what you build at the end of those months is actually an enforcement layer or a collection of validation utilities that you will be maintaining forever.

One of those is infrastructure. The other is overhead.