The last two posts covered what breaks and why. The math behind compounding failure. The attention mechanism that makes prompt engineering an incomplete fix. This one covers what actually solves it.

Not a better model. Not a longer system prompt. A layer that owns what the model was never designed to own.

The conflict of interest

You can't rely on the model to verify its own outputs. Not because it lacks the capability, but because the system doing the checking is the same system that produced the output.

When the model fails to respect a constraint at step 8, the standard practice is to ask it to self-check and self-correct, usually by adding an instruction that tells it to check its own work. But that self-check runs inside the same attention distribution that caused the drift in the first place. The same positional decay that outweighed your constraint at step 8 will likely outweigh your self-check instruction at step 8 too. You are routing verification through the exact mechanism that failed, and putting all the responsibility on a probabilistic process.

As I've said before, this is never a capability problem. It is a structural conflict of interest. The execution engine and the compliance check are the same thing. You would not ask a database to be its own transaction manager. You would not ask a compiler to decide whether its own output is correct. The check has to be external, or it is not a check at all.

What the enforcement layer owns

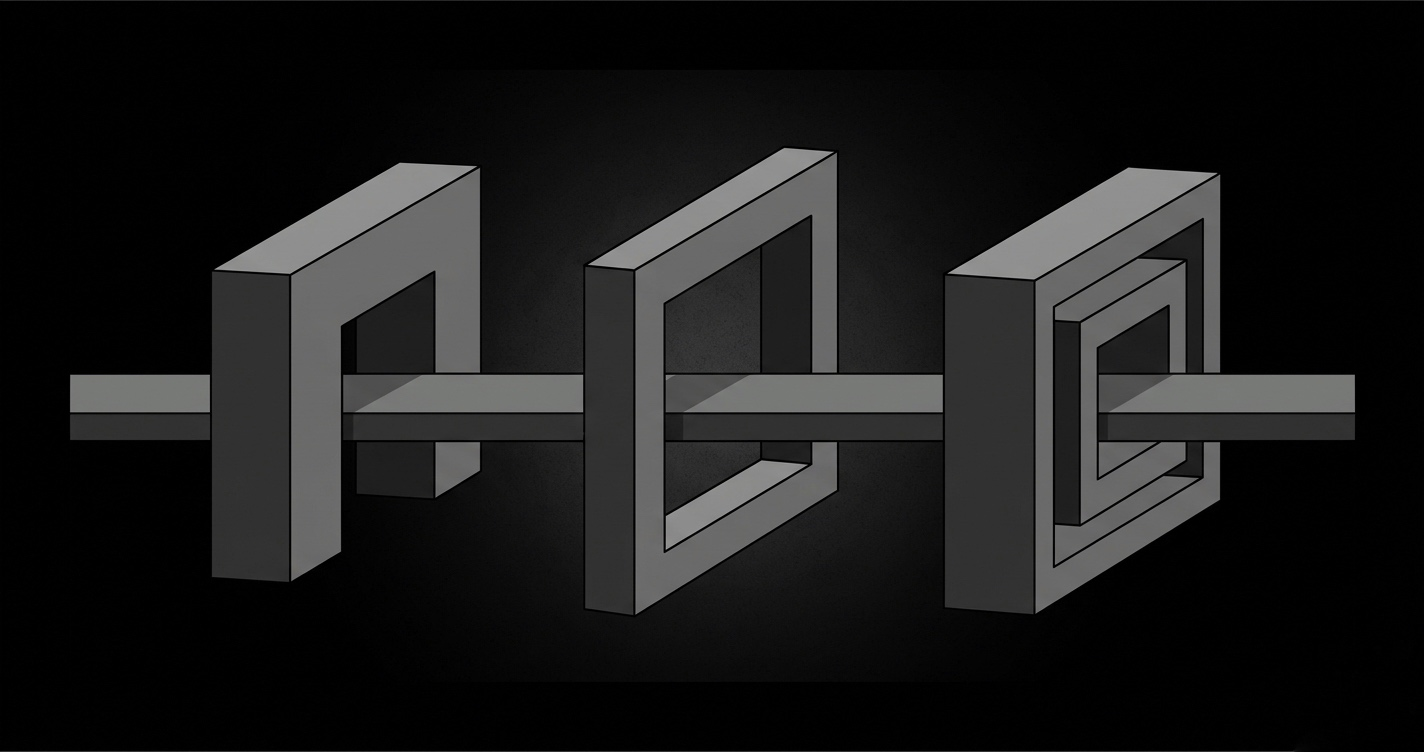

It is simple. If the model cannot own its own compliance, something else has to. That something is the enforcement layer. It owns three things, in plain terms.

It owns admission. Before a step runs, this layer decides whether execution should proceed. This call is independent of the model. If the preconditions for this step were not met by the previous one, execution does not continue. The model never sees the request.
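A minimal sketch of an admission gate, in Python. The names `Step`, `admit`, and `AdmissionDenied` are hypothetical and are not Context Layer's API. The sketch only illustrates the idea above: the precondition is plain code evaluated before any model call, so a failed precondition stops execution without the model ever being involved.

```python
from dataclasses import dataclass
from typing import Any, Callable


class AdmissionDenied(Exception):
    """Raised when a step's preconditions are not met (hypothetical name)."""


@dataclass(frozen=True)
class Step:
    name: str
    # Deterministic predicate over workflow state, evaluated before any model call.
    precondition: Callable[[dict[str, Any]], bool]


def admit(step: Step, state: dict[str, Any]) -> None:
    """Decide, independently of the model, whether this step may run."""
    if not step.precondition(state):
        raise AdmissionDenied(f"{step.name}: preconditions not met")


# Example: the reply step requires an earlier step to have resolved an order ID.
draft_reply = Step("draft_reply", lambda s: s.get("order_id") is not None)

admit(draft_reply, {"order_id": "A-1042"})  # passes silently
try:
    admit(draft_reply, {"order_id": None})  # blocked; no model call happens
except AdmissionDenied as exc:
    print(exc)  # draft_reply: preconditions not met
```

Because the gate is ordinary code, its decision is the same on every run for the same state, which is the property a prompt instruction cannot give you.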

It owns context. It ensures that the constraints the model sees at step 8 are identical to the ones it saw at step 1. Not because you repeated them in the prompt. Not because you used a longer system prompt. But because something outside the model is responsible for assembling context before every invocation, deterministically, independent of what accumulated in between.
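One way to picture deterministic context assembly is a pure function that builds the model's input from owned state only. This is a sketch under assumptions: the `role`/`content` message dicts mirror a common chat-API shape, and `Constraint` and `assemble_context` are hypothetical names, not part of any real library or of Context Layer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Constraint:
    id: str
    text: str


def assemble_context(
    constraints: tuple[Constraint, ...],
    instruction: str,
    inputs: dict[str, str],
) -> list[dict[str, str]]:
    """Build the model's input from owned state only.

    The constraint block is rendered the same way on every call, and nothing
    from earlier model turns is carried over implicitly.
    """
    constraint_block = "\n".join(f"[{c.id}] {c.text}" for c in constraints)
    # Sort inputs so the same inputs always render in the same order.
    input_block = "\n".join(f"{k}: {v}" for k, v in sorted(inputs.items()))
    return [
        {"role": "system", "content": "Constraints:\n" + constraint_block},
        {"role": "user", "content": f"{instruction}\n\n{input_block}"},
    ]


CONSTRAINTS = (
    Constraint("C1", "Never quote a price that is not in the catalog."),
    Constraint("C2", "Reply in English."),
)

step1 = assemble_context(CONSTRAINTS, "Classify the request.", {"message": "Where is my order?"})
step8 = assemble_context(CONSTRAINTS, "Draft the final reply.", {"order_status": "shipped"})
assert step1[0] == step8[0]  # identical constraint block at step 1 and step 8
```

The step-specific part changes; the constraint block cannot, because no path exists for accumulated output to edit it.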

It owns verification. Once the model responds, this layer checks the output against the constraints it owns. The model is not asked whether it complied. The enforcement layer determines that independently. If the output does not satisfy the constraint, the step does not pass. The next step does not run.
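Verification can be sketched as a list of deterministic checks run against the output, none of which involve asking the model anything. The two checks here (JSON shape and forbidden phrases) are illustrative assumptions; your real constraints would be whatever your workflow owns. All names are hypothetical.

```python
import json
from typing import Callable, Optional

# A check returns None if the output satisfies its constraint, else a reason.
Check = Callable[[str], Optional[str]]


def is_json_with_keys(*keys: str) -> Check:
    def check(output: str) -> Optional[str]:
        try:
            data = json.loads(output)
        except json.JSONDecodeError as exc:
            return f"not valid JSON: {exc.msg}"
        if not isinstance(data, dict):
            return "expected a JSON object"
        missing = [k for k in keys if k not in data]
        return f"missing keys: {missing}" if missing else None
    return check


def forbids(*phrases: str) -> Check:
    def check(output: str) -> Optional[str]:
        hits = [p for p in phrases if p.lower() in output.lower()]
        return f"forbidden phrases: {hits}" if hits else None
    return check


def verify(output: str, checks: list[Check]) -> list[str]:
    """Run every check; an empty list means the step passes."""
    return [reason for check in checks if (reason := check(output)) is not None]


checks = [is_json_with_keys("status", "reply"), forbids("guaranteed refund")]
print(verify('{"status": "ok", "reply": "On its way."}', checks))  # []
print(verify('{"status": "ok"}', checks))  # ["missing keys: ['reply']"]
```

Returning reasons rather than a bare boolean is a design choice: when a step fails, you know which constraint it broke.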

This is how you make sure you're using the model for exactly the job it was designed for: generating the next likely token given a context. The enforcement layer takes care of everything the model was never designed to do.

What this changes for the workflow

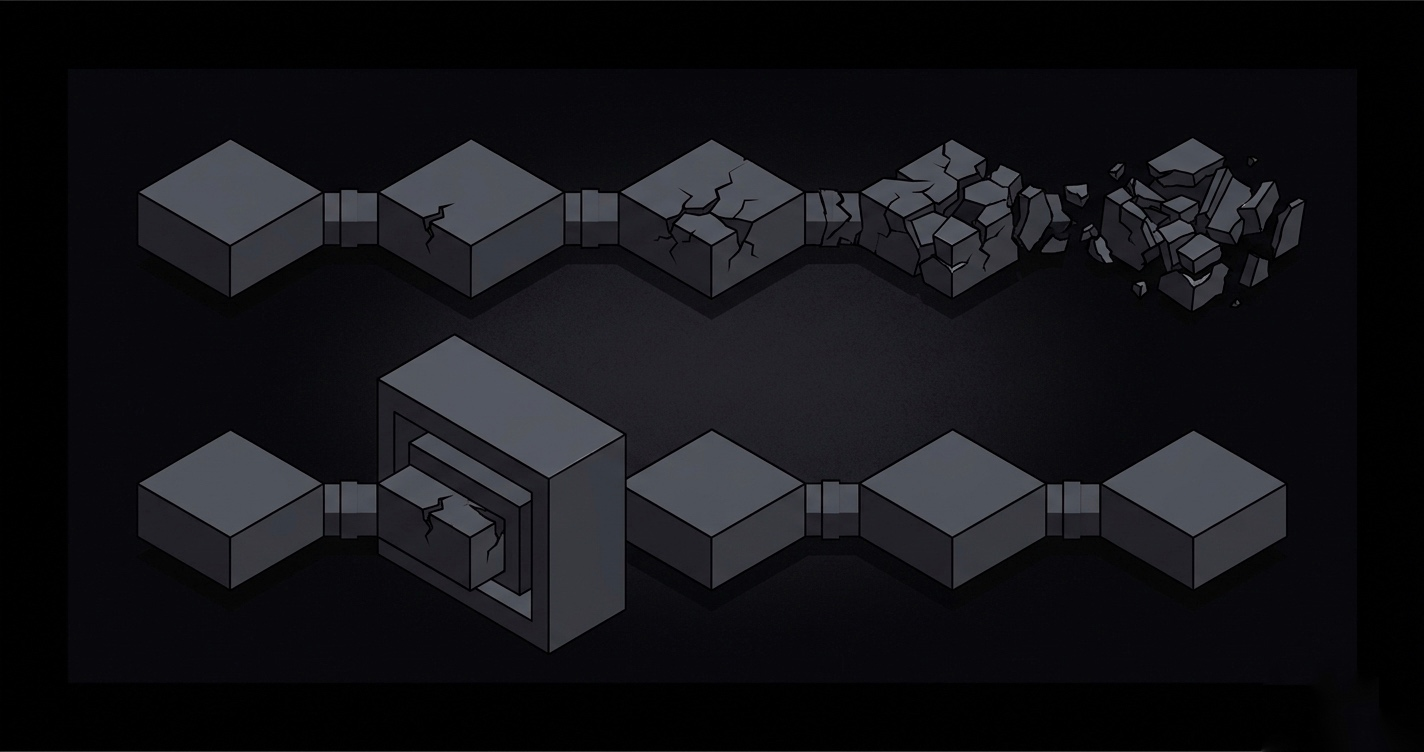

Without the enforcement layer, every step in your workflow is a probabilistic event. Step 3 produces output that looks correct. Step 4 receives it and proceeds. By step 8 you have a tower of unverified assumptions that the model built confidently, one token at a time, and nothing flagged any of it.

The compounding failure math from the first post exists because errors propagate. Step 3 drifts slightly. Step 4 inherits that drift. Step 5 builds on it. Nothing intercepts it between steps because nothing owns the space between steps.
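As a rough illustration of why propagation matters, assume each step succeeds independently with the same probability p (a simplifying assumption; the first post's own derivation may be framed differently). The chance that an n-step run is correct end to end is the product of the per-step probabilities:

```latex
P_{\text{run}} = \prod_{i=1}^{n} p_i = p^{\,n} \qquad \text{(when every } p_i = p\text{)}

\text{e.g. } p = 0.95,\; n = 8:\qquad P_{\text{run}} = 0.95^{8} \approx 0.663
```

With these hypothetical numbers, a step that is right 95% of the time, chained eight times, yields a fully correct run only about two times in three, and the product keeps shrinking as n grows.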

The enforcement layer changes this by owning that space. Each step either passes the verification check or it doesn't. If it doesn't, the next step doesn't run. The drift cannot propagate because it gets caught before it becomes the next step's input. The compounding stops being a compounding problem. It becomes a single-step failure, which is a problem you can actually debug.
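The gating behavior described above fits in a short loop: generate, check, and stop at the first failure so the failing output never becomes the next step's input. This is a minimal sketch, assuming each step's model call can be stood in for by a plain function; `GatedStep`, `RunResult`, and `run_gated` are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass(frozen=True)
class GatedStep:
    name: str
    generate: Callable[[str], str]          # stands in for a model call
    check: Callable[[str], Optional[str]]   # deterministic; None means "passes"


@dataclass
class RunResult:
    completed: bool
    outputs: list[str] = field(default_factory=list)
    failed_step: Optional[str] = None
    reason: Optional[str] = None


def run_gated(steps: list[GatedStep], first_input: str) -> RunResult:
    """Run steps in order; stop at the first output that fails its check."""
    outputs: list[str] = []
    current = first_input
    for step in steps:
        output = step.generate(current)
        reason = step.check(output)
        if reason is not None:
            # The failing output is never handed to the next step.
            return RunResult(False, outputs, step.name, reason)
        outputs.append(output)
        current = output
    return RunResult(True, outputs)


# Usage: a fake three-step workflow where the middle step drifts.
calls: list[str] = []


def fake_model(tag: str) -> Callable[[str], str]:
    def generate(prev: str) -> str:
        calls.append(tag)  # record which steps actually ran
        return f"{prev}>{tag}"
    return generate


def not_empty(out: str) -> Optional[str]:
    return None if out else "empty output"


def no_drift(out: str) -> Optional[str]:
    return "drifted" if "drift" in out else None


steps = [
    GatedStep("extract", fake_model("extract"), not_empty),
    GatedStep("plan", fake_model("drift"), no_drift),
    GatedStep("act", fake_model("act"), not_empty),
]
result = run_gated(steps, "input")
print(result.failed_step, calls)  # plan ['extract', 'drift']
```

The result names the exact step that failed and why, and the third step never ran: the single-step failure the paragraph above describes.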

This is not about making the model smarter. The model is the same model. It is about making sure bad output cannot silently become the next step's input.

The numbers, with honest framing

120 runs. Strict multi-step agentic workflow. Real production constraints.

Direct LLM calls (GPT-4o mini baseline): 16.4%

With enforcement layer: 97.1%

Same model. Same prompts. Same task. Same verifier.

I want to be straight about what this is. One benchmark, one workflow. Real-world improvement will vary depending on your workflow complexity, your constraints, your use case. I am not telling you the number will be the same for you.

What I am telling you is what changed between the baseline and the enforcement-layer result. Not the model. Not the prompts. The infrastructure around them: the enforcement layer. That is the only variable that moved.

Cheap models become reliable enough to ship when the system running them enforces what the model cannot. That is what the number is actually saying.

What Context Layer is

This enforcement layer is what I built. It is called Context Layer.

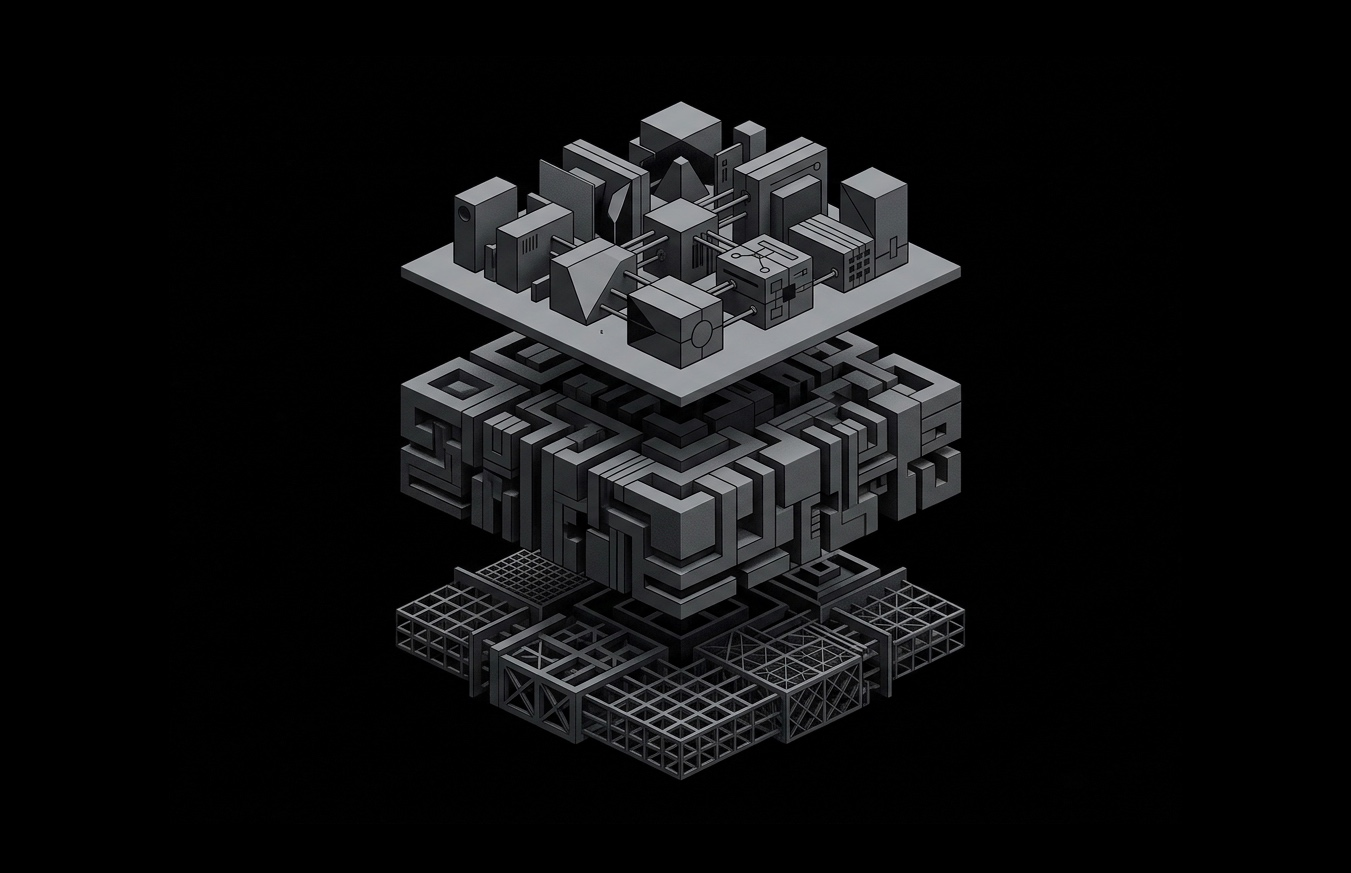

It sits between your application and your LLM provider. Your application decides what to ask. The model decides how to generate. Context Layer controls everything in between. It is not a framework. Not a prompt library. Not an orchestration tool. It is a runtime execution boundary.
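To make "sits between your application and your LLM provider" concrete, here is a minimal sketch in which the application calls a boundary object and the boundary depends only on a small provider interface. Everything here is hypothetical (`Provider`, `Boundary`, `VerificationFailed`, and the stub provider); it is not Context Layer's interface and does not call any real provider SDK.

```python
from typing import Callable, Optional, Protocol


class Provider(Protocol):
    """Anything that turns a list of chat messages into text (hypothetical)."""

    def complete(self, messages: list[dict[str, str]]) -> str: ...


class VerificationFailed(Exception):
    pass


class Boundary:
    """Sits between application code and a provider; owns context and checks."""

    def __init__(self, provider: Provider, constraints: list[str],
                 check: Callable[[str], Optional[str]]) -> None:
        self._provider = provider
        self._constraints = list(constraints)
        self._check = check

    def invoke(self, request: str) -> str:
        # The boundary, not the application, assembles what the model sees.
        messages = [
            {"role": "system", "content": "\n".join(self._constraints)},
            {"role": "user", "content": request},
        ]
        output = self._provider.complete(messages)
        reason = self._check(output)
        if reason is not None:
            raise VerificationFailed(reason)
        return output


class StubProvider:
    """Stand-in for a real provider client so the sketch runs offline."""

    def complete(self, messages: list[dict[str, str]]) -> str:
        return "Your order shipped on Monday."


boundary = Boundary(
    StubProvider(),
    constraints=["Reply in one sentence.", "Do not promise delivery dates."],
    check=lambda out: "promised a date" if "will arrive" in out else None,
)
print(boundary.invoke("Where is my order?"))  # Your order shipped on Monday.
```

In this sketch, swapping providers means passing a different object with a `complete` method; the application code calling `invoke` does not change.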

It runs in two modes depending on what you are building.

The first is for structured multi-step workflows where steps need to execute in a defined order, constraints need to hold across every step, and execution needs a clear termination point. Each step is verified before the next one runs. The workflow either completes correctly or it doesn't complete.

The second is for persistent conversational execution where session state needs to evolve across messages without drifting. No explicit step ordering, but the same guarantee: context does not drift, constraints do not fade, the session stays coherent.
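For the conversational mode, one way to get "context does not drift" (assumed here for illustration, not taken from Context Layer) is to keep session state explicit, change it only through checked updates, and rebuild the model's context from constraints plus current state on every message instead of replaying the raw transcript. `Session`, `apply_update`, and `context_for` are hypothetical names.

```python
from dataclasses import dataclass, field


@dataclass
class Session:
    """Conversational sketch: explicit state, context rebuilt every turn."""
    constraints: tuple[str, ...]
    allowed_keys: frozenset[str]
    state: dict[str, str] = field(default_factory=dict)

    def apply_update(self, update: dict[str, str]) -> None:
        # State changes only through checked updates, never by accumulation.
        unknown = set(update) - self.allowed_keys
        if unknown:
            raise ValueError(f"unknown state keys: {sorted(unknown)}")
        self.state.update(update)

    def context_for(self, message: str) -> list[dict[str, str]]:
        # Rebuilt from constraints and current state on every message; the raw
        # transcript is not replayed, so earlier turns cannot push constraints
        # further back in the context.
        state_lines = "\n".join(f"{k}: {v}" for k, v in sorted(self.state.items()))
        return [
            {"role": "system", "content": "\n".join(self.constraints)},
            {"role": "system", "content": "Session state:\n" + state_lines},
            {"role": "user", "content": message},
        ]


session = Session(
    constraints=("Answer only about the user's own account.",),
    allowed_keys=frozenset({"plan", "language"}),
)
first = session.context_for("Hi")
session.apply_update({"plan": "pro"})
later = session.context_for("What does my plan include?")
assert first[0] == later[0]  # constraints identical across turns
assert later[1]["content"].endswith("plan: pro")
```

The state evolves across messages; the constraint block does not, regardless of how long the session runs.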

Both modes, one execution boundary. The model stays the model. Context Layer stays responsible for the part the model was never built to handle.

Close

The model was never the bottleneck. The infrastructure was.

Every failure pattern I documented (the drift, the loops, the fabricated state, the babysitting overhead) was not a symptom of a weak model. Each was a symptom of a system that placed the entire execution responsibility on a probabilistic token generator and expected it to hold.

It cannot hold. Not because it is not good enough. Because it was not designed for that job.

When you separate the execution responsibility from the generation responsibility, the model does not get smarter. It gets used correctly. And that turns out to be enough.

Next post covers why building this yourself costs more than you think.

FAQ

Does this mean I need to replace my existing LLM setup?

No. Context Layer sits between your application and your LLM provider. Your application stays exactly as it is. Your provider stays exactly as it is. You are not replacing anything. You are adding the layer that was always missing between them.

Does it work with any model or provider?

The benchmark was run on GPT-4o mini. The enforcement layer is not model-specific. Same model, same prompts, different infrastructure around them. That is the entire point. You do not need a better model. You need a better system around the model you already have.

What happens if the enforcement check fails?

The step does not pass. The next step does not run. The workflow does not continue on bad output. That is exactly the behavior you want. A caught failure at step 3 is a debuggable problem. A failure that propagated silently to step 8 is a 3am problem.

Is this the same as adding a validation step in my application code?

No. Application-level validation checks output format and structure. It does not own context assembly, it does not enforce constraints deterministically across steps, and it does not verify output against owned context independently of the model. You can write a regex. You cannot write an enforcement boundary in a utility function.

Do I need to change my prompts?

No. Same prompts. The improvement was not achieved by rewriting anything the model receives as instructions. The infrastructure changed; the prompts did not. If the fix required prompt changes, it would not be an infrastructure fix. It would be prompt engineering with extra steps.