TLDR:

- Transformers use scaled dot-product attention. Every token competes for attention weight on every forward pass.

- Positional decay means older constraints lose statistical influence as context grows.

- Full context reinterpretation means the same constraint gets weighted differently at step 8 than at step 1.

- These two mechanisms compound. You cannot fix an attention distribution problem with a text solution.

- The enforcement layer has to live outside the model.

The last post showed what drift looks like mathematically. In this one I will explain why it happens at the architectural level and why you cannot prompt your way out of it. There are two mechanisms in action here. Once you get the hang of both, the conclusion becomes obvious.

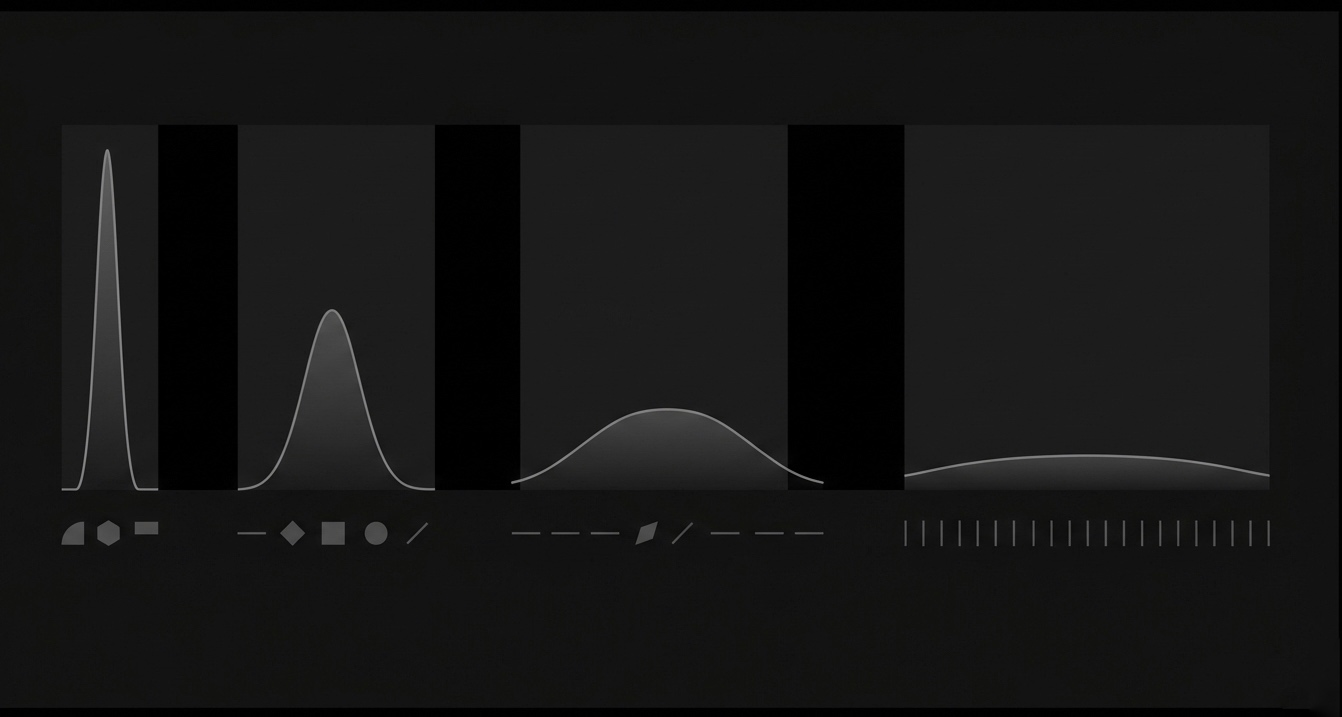

The attention formula

Every time your LLM generates a token, it runs this:

Attention(Q, K, V) = softmax(QK^T / √d_k) V

Here, Q is the query: what the current position is looking for. K is the key: what each token offers. V is the value: the content to retrieve. The softmax converts the scaled dot products into a probability distribution over every token the current position can attend to in the context window.

This is the core mechanism of an LLM. Everything else follows from it.
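To make the formula concrete, here is a minimal sketch of scaled dot-product attention for a single query, in plain Python. The vectors are made up for illustration and the function names are my own. A real model runs this with learned projections, many heads, and many layers.

```python
import math


def softmax(scores):
    # Subtract the max for numerical stability; the result is unchanged.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(query, keys, values):
    """Scaled dot-product attention for one query vector."""
    d_k = len(query)
    # One score per token in the context: (q . k) / sqrt(d_k)
    scores = [
        sum(q * k for q, k in zip(query, key)) / math.sqrt(d_k)
        for key in keys
    ]
    weights = softmax(scores)  # sums to 1 across all tokens
    dim = len(values[0])
    # Output is the weighted average of the value vectors.
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, output


# Toy example: three tokens in the context, two-dimensional vectors.
query = [1.0, 0.0]
keys = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
values = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
weights, output = attention(query, keys, values)
print(weights, output)
```

The weights always sum to 1. That is the whole point: attention is a fixed budget split across whatever is in the context.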

In this formula, the softmax normalizes attention scores across all tokens in the context window, not across the output vocabulary. That one is a separate softmax at the output layer. Every token you add means your original constraint has to compete across a larger set of attention scores. The denominator grows, so, all else being equal, the constraint's relative weight drops.
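A toy calculation shows the dilution. This sketch assumes one constraint token with a fixed raw score and every other token sitting at the same lower score. Both numbers are invented; real scores are learned and vary per head and per layer, so treat this as an illustration of the denominator effect, not a measurement.

```python
import math


def constraint_weight(constraint_score, n_other_tokens, other_score=0.0):
    """Softmax weight of one constraint token against n identical others.

    Assumption for illustration: every other token has the same raw score.
    """
    numerator = math.exp(constraint_score)
    denominator = numerator + n_other_tokens * math.exp(other_score)
    return numerator / denominator


# The constraint's score never changes. Only the context size does.
for n in (10, 100, 1000, 10000):
    print(n, round(constraint_weight(2.0, n), 4))
```

The constraint's score stays at 2.0 the whole time. Its weight still falls, because the only thing that changed is how many other terms sit in the denominator.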

Stuffing your constraints into a longer system prompt is not going to fix the drift. You are basically increasing the number of tokens your original constraint has to fight against. That doesn't help. The math doesn't work in your favor.

Mechanism 1: Positional decay

Research on the "lost in the middle" problem shows that LLMs tend to make better use of tokens at the beginning and the end of the context window. Information that lands in the middle tends to get far less attention, even when it is relevant.

The same thing happens in multistep workflows. You put the constraints at the top of your system prompt. But by the time the LLM reaches step 8, thousands of tokens of tool outputs and intermediate reasoning have piled up between that constraint and the current generation position. The constraint hasn't moved, and the model hasn't forgotten it. It is still there in the context, but its positional influence is no longer the same.

As a consequence, even though your constraint held strong authority in the LLM's context at step 1, by step 8 it probably carries less weight than the tool output from step 6. Not because the model decided to ignore it specifically, but because the attention distribution shifted as context accumulated.

To compensate, you can try repeating constraints closer to the generation position. But this helps only at the margins. It doesn't change the underlying softmax distribution. You are fighting the architecture itself, one prompt at a time.
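Here is a rough sketch of why repetition only helps at the margins, under the same kind of toy assumptions: each copy of the constraint gets an identical invented score, and every other token shares one lower score. Position effects are ignored entirely, so this only captures the softmax side of the story.

```python
import math


def combined_constraint_weight(copies, n_other_tokens,
                               constraint_score=2.0, other_score=0.0):
    """Total softmax weight held by all copies of the constraint.

    Assumption for illustration: identical scores per copy, identical
    scores for all other tokens, no positional effects.
    """
    constraint_mass = copies * math.exp(constraint_score)
    other_mass = n_other_tokens * math.exp(other_score)
    return constraint_mass / (constraint_mass + other_mass)


one_copy = combined_constraint_weight(1, 5000)
three_copies = combined_constraint_weight(3, 5000)
three_copies_longer = combined_constraint_weight(3, 15000)
print(one_copy, three_copies, three_copies_longer)
```

In this toy model, three copies in a context three times as long hold exactly the same combined weight as one copy in the original context. Repetition buys a linear gain. Context growth takes it back.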

Mechanism 2: Full context reinterpretation

LLMs are stateless. There is no memory between calls. Every single invocation reads the entire context window from scratch and reweights everything based on what is currently there.

This means the same constraint gets weighted differently at step 1 and at step 8. At step 1 it sits in a short, clean context and it dominates. At step 8 it is surrounded by tool outputs, intermediate results, and everything else that accumulated in between. The model sees the exact same words. But the surrounding context changed, and so the weight changed.

This is not the model drifting or forgetting. The model is doing exactly what it was built to do. Generating the next token based on everything it sees. The context changed. So the output changed. That is not a bug. That is the architecture doing its job.
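The statelessness is easy to see in the shape of a typical agent loop. In this sketch, `fake_model` is a hypothetical stand-in for a real LLM call, and the constraint text is invented. The share of words belonging to the constraint is a crude proxy for its presence in the context, not an attention weight.

```python
def build_context(system_prompt, history):
    # The whole context is rebuilt and resent on every call.
    return "\n".join([system_prompt] + history)


def fake_model(context):
    # Hypothetical stand-in for an LLM call. It keeps no state between
    # calls; everything it "knows" arrives in the context argument.
    return f"tool output after reading {len(context.split())} tokens"


system_prompt = "CONSTRAINT: never delete files."
history = []
shares = []

for step in range(1, 9):
    context = build_context(system_prompt, history)
    # Fraction of the context (by word count) that is the constraint.
    share = len(system_prompt.split()) / len(context.split())
    shares.append(share)
    history.append(fake_model(context))

for step, share in enumerate(shares, start=1):
    print(step, round(share, 3))
```

The constraint string is byte-for-byte identical on every call. What changes is everything around it, and the model reads all of it fresh each time.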

Why both mechanisms together make this unsolvable at the prompt level

Positional decay means your constraint loses influence as context grows. Full context reinterpretation means that reduced influence gets recalculated on every single call, against a context that is getting noisier with every step.

By step 8, you have a constraint sitting deep in the context, surrounded by more recent tokens, being recalculated on every forward pass. The model is not ignoring it. It is being outweighed. Every step.

| Step | Context size | Constraint influence |
|---|---|---|
| 1 | Small | High |
| 4 | Medium | Reduced |
| 8 | Large | Low |
| 12 | Very large | Near negligible |

Why prompt engineering cannot fix this

Every prompt engineering trick operates inside the context window. Repeating constraints. Stronger language. Chain of thought. Structured schemas. All of these change what is in the context. None of them change how the model weights what is in the context.

The softmax does not care how strongly worded your instruction is. It runs the same math regardless. You are trying to solve an attention distribution problem with a text solution. These are different problems. One lives in the architecture. The other lives in the prompt. The prompt cannot reach the architecture.

What actually fixes it

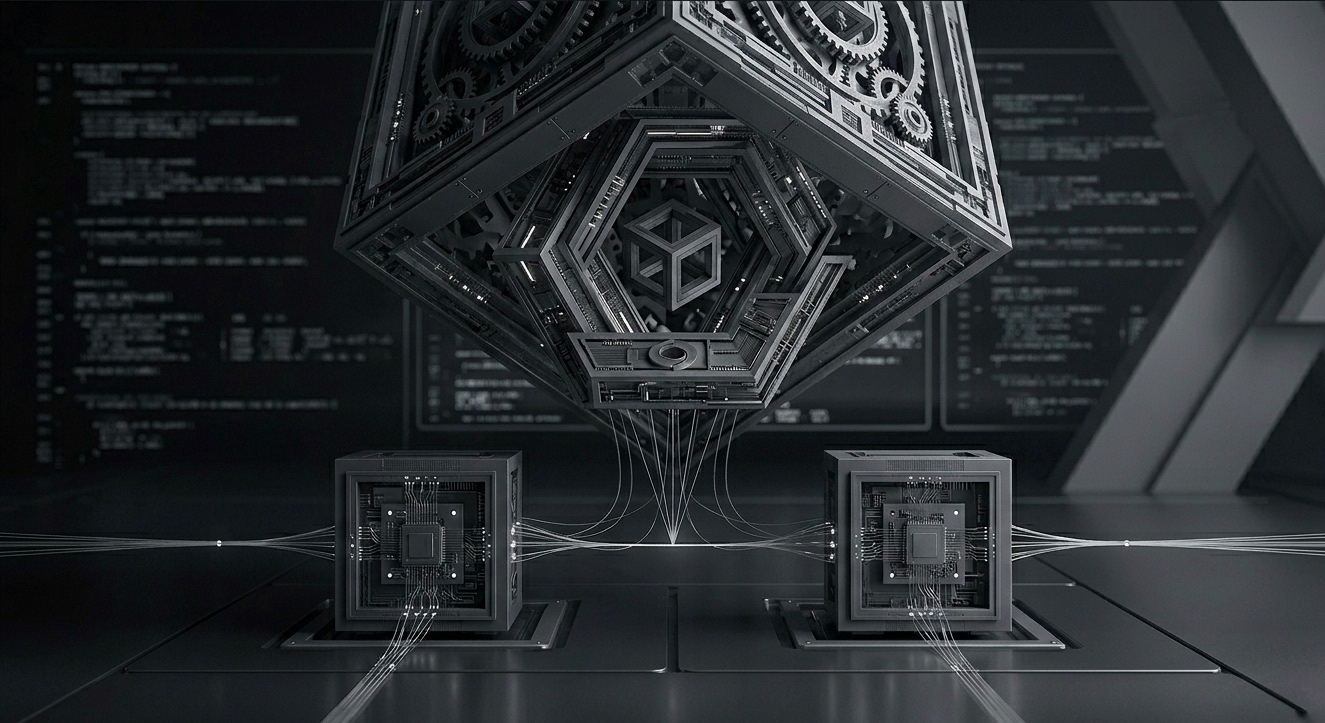

If the model cannot hold constraints across steps because of how its own attention works, the enforcement has to live outside the model. Something that does not participate in the attention distribution. Something that does not get outweighed by accumulated context. Something that checks whether the constraint was satisfied independently, without asking the model.

The enforcement layer verifies the output at each step against the original constraint before the next step runs. The model is not involved in its own compliance check. That is the only way to break the dependency on attention weight.
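A minimal sketch of the idea, not the actual enforcement layer (the next post covers that). Everything here is hypothetical: the step functions stand in for model calls, and the constraint is a deliberately simple string check. The point is only that the check is plain code that runs outside the model and never enters the context window.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class StepResult:
    step: int
    output: str
    passed: bool


def run_with_enforcement(steps: List[Callable[[], str]],
                         check: Callable[[str], bool]) -> List[StepResult]:
    """Run steps in order, verifying each output before the next step runs."""
    results = []
    for number, step in enumerate(steps, start=1):
        output = step()          # in practice, a model call
        passed = check(output)   # deterministic check, no model involved
        results.append(StepResult(number, output, passed))
        if not passed:
            break                # stop before the violation propagates
    return results


def no_destructive_commands(output: str) -> bool:
    # Hypothetical constraint: the agent must never emit "rm -rf".
    return "rm -rf" not in output


# Hypothetical step outputs standing in for model generations.
steps = [
    lambda: "ls ./build",
    lambda: "rm -rf ./build",
    lambda: "echo done",
]

results = run_with_enforcement(steps, no_destructive_commands)
for r in results:
    print(r.step, "ok" if r.passed else "blocked", r.output)
```

The check gives the same answer at step 2 as it would at step 200. It has no attention budget to dilute.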

This is the only architectural fix that addresses both mechanisms at once. Positional decay does not matter if enforcement is external. Context reinterpretation does not matter if the check happens outside the context window.

The next post covers what that enforcement layer actually does and what happens to the reliability numbers when you add it.

FAQ

Repeating constraints helps a little, so why doesn't it fully fix it?

Because repetition adds tokens, which increases context size, which triggers positional decay for everything else. You fix one step and make the next worse.

Do bigger context windows eliminate this problem?

No. They give you more runway before decay becomes critical. The architecture does not change. The math does not change.

Can fine-tuning fix attention decay?

Fine-tuning changes the model's weights, including the projections that produce Q, K, and V, so it can shift what the model tends to attend to. It does not change the attention mechanism itself. The softmax still redistributes weights across every token on every forward pass.

Why doesn't the model just tell you when it's about to ignore a constraint?

Because from its perspective it is not ignoring anything. It is generating the most likely next token given everything in the context. The constraint is there. It just lost the statistical competition.