Every AI dev knows this combination - a computer, AI, and zero control over their agents. And when these three meet in production, the anxiety starts. The workflow showed great results locally. But in production, something is off. Step 3 produces output that looks correct. Step 4 receives it and proceeds. By step 8 the workflow is producing plausible nonsense and the dev is awake at 3am trying, and failing, to find which step introduced the drift.

There is math to it.

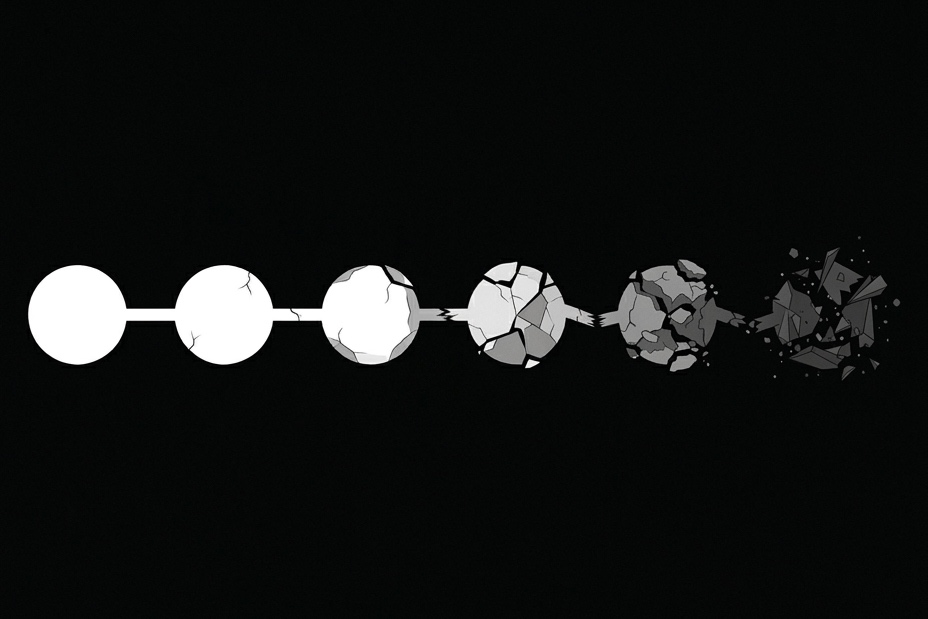

If each step in your workflow has 95% reliability, which does feel like a high bar, I won't deny, it goes down to 60% end-to-end reliability if the workflow is 10 steps long. 20 steps and you are at 36%.

P(success) = 0.95^n

n=10 → 0.598

n=20 → 0.358

n=30 → 0.215

The natural reaction is to reach for the obvious fix: better prompts, smarter models, more examples in context. This diagnosis is wrong. The compounding is not a model quality problem. It is a systems problem.

The model is doing exactly what it was designed to do

A model generates the next likely token based on the context it receives. That is its job. It has no mechanism to hold a constraint established at step 1 with equal weight at step 8. It is not designed to verify that its current output is consistent with decisions made earlier in the workflow. It is not built to refuse execution if the preconditions for this step were not met.

When you write "always follow these constraints" in a system prompt, you are asking the model to perform a function it was not built for. The model understands the instruction. But by step 8, with thousands of tokens of tool outputs, intermediate results, and accumulated context between the system prompt and the current generation, that constraint is competing with everything else in the attention distribution. Models have positional decay, they weight recent context more heavily than older context. So sometimes the constraint loses.

On the surface it looks like a failure of the model. Look closer and you will see it is a failure of the architecture the model was placed in.

What actually breaks and why

Production LLM workflows fail in four ways that compound across steps:

Constraint drift: Constraints established at step 1 degrade as context accumulates. The model does not forget them explicitly. It just weighs them less as other context grows. By step 10, a constraint that was authoritative at step 1 carries less weight than a tool output from step 6. Positional decay makes the model prioritize recent events over older ones.

State fabrication: The model needs to reference state from a previous step but that state is no longer in its context window, has been summarized away, or was never explicitly passed forward with enough emphasis. Rather than halting, the model generates a plausible, probabilistic value for the missing state. The workflow continues on fabricated inputs.

Silent semantic drift: The output at step 5 is structurally valid. It passes any schema check you put on it. But its semantic content has drifted from what step 5 was supposed to produce. Step 6 receives valid-looking input that means something different from what you intended. Nothing flags it.

Unverified assumptions: The model makes an assumption at step 3 that is not grounded in verified state. Step 4 inherits that assumption. Step 5 builds on it. By step 8 you have a tower of unverified inferences that looks exactly like a workflow result.

None of these failures produce errors. They produce confident, well-formed, plausible output that is correct given the state the model had when it produced it. But wrong in your actual reality.

What the architecture is missing

Every other production system we build has a layer between execution components that owns state verification, constraint enforcement, and output validation before the next component runs. Circuit breakers, admission controllers, pre-conditions, invariant checks. Different names, same pattern. Something external to the components owns the guarantee that execution is proceeding correctly.

LLM workflows do not have this layer. The model is expected to be both the execution engine and the integrity layer simultaneously. That is a conflict of interest by design. The model cannot verify its own outputs against constraints it is also responsible for maintaining. The check has to be external.

This is not a new problem. We solved it for distributed systems, for microservices, for databases. We just have not applied the same thinking to agents yet.

To conclude

LLMs are a system. Not a magical black box that can handle anything and everything thrown at it. On a step level they can. But zoom out and you will see how much they are being asked to hold simultaneously. That is why they drift. That is why they miss your exact intended requirements.

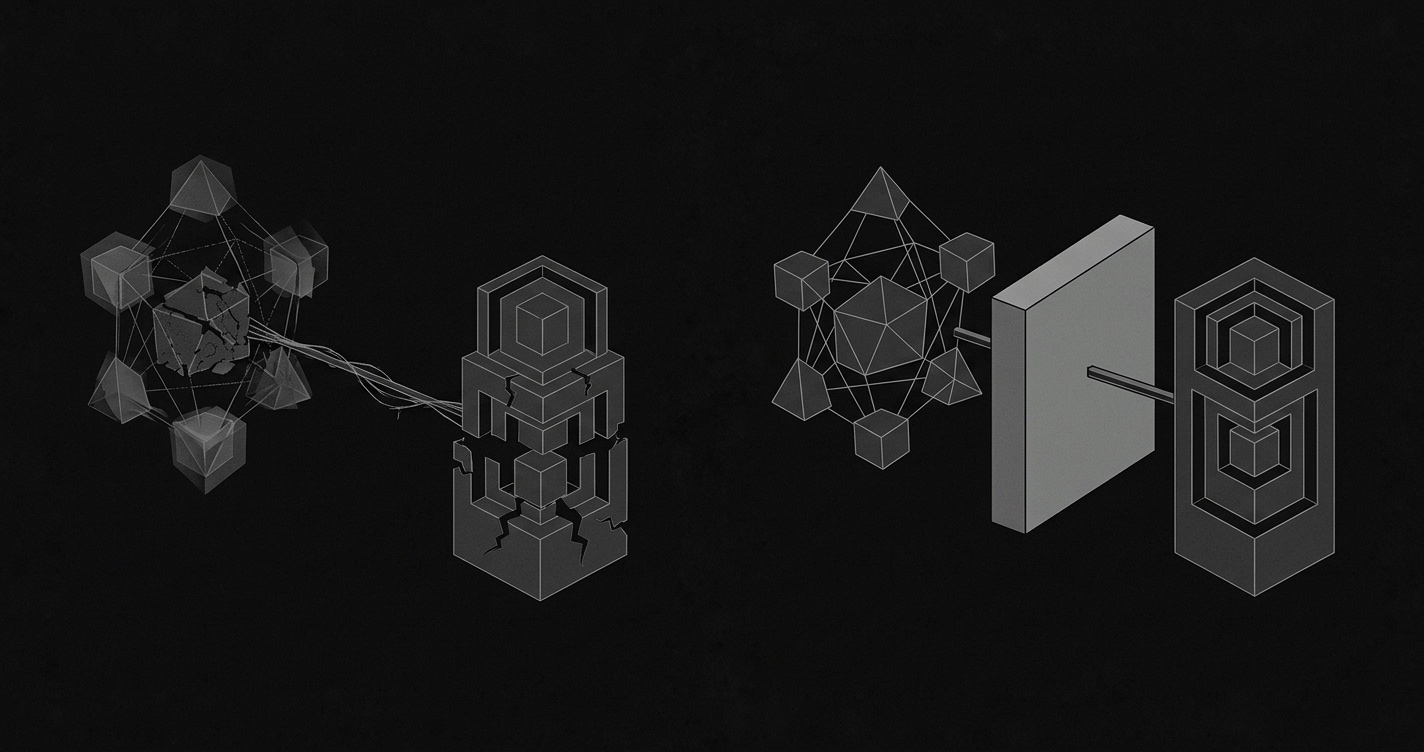

They need control. They need an enforcement layer that sits outside the model and owns the guarantee that execution is proceeding correctly at each step.

That enforcement layer exists. I built it. It is called Context Layer. It sits between your application and your LLM provider, enforces constraints at each step before execution continues, and verifies outputs against owned context across turns. On strict multi-step workflows, direct LLM calls hit around 7% success. With the enforcement layer: 81.7%. Same model. Same prompts. Different infrastructure.

It's called Context Layer. More on how it works in the next part.